If your analytics delivery cycle is measured in months, decision-making will inevitably move outside the analytics function.

I’ve sat in many meetings where an analytics project was discussed like a construction project.

- Timelines stretch into months.

- Dependencies are mapped.

- Data pipelines are debated.

- Dashboards are envisioned long before a single decision is clarified.

And somewhere in the middle of all this, a simple question gets lost:

What decision are we trying to make and when do we need to make it?

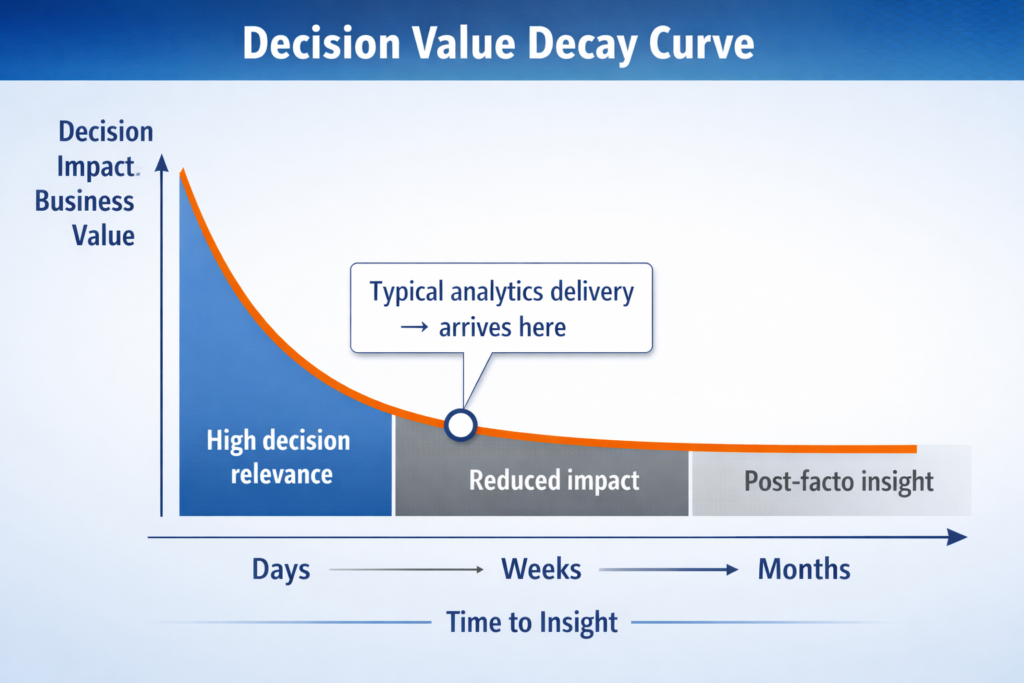

Because if we are honest, most business decisions cannot wait for three months. They happen in days. Sometimes in hours.

Yet the analytics function meant to support those decisions often arrives late. Well-built, technically sound, visually polished… and irrelevant.

This is not a capability gap. This is a design problem.

The Cost No One Measures

When analytics takes months, the damage is rarely visible in a dashboard. It shows up elsewhere.

Decisions get delayed, or worse, they get made without data. Opportunities pass quietly because the insight came too late. Business teams stop waiting and start working around analytics.

You begin to see the signs:

- Excel models resurface

- Gut-based decisions become acceptable again

- “We’ll validate later” becomes the default approach

Over time, something more subtle happens – trust erodes.

Not because analytics is wrong, but because it is late.

And in decision-making, timing is not a constraint. It is part of accuracy.

An insight delivered after the decision is made is not just useless; it is misleading. It creates the illusion that the organization is data-driven, while in reality, decisions are being made elsewhere.

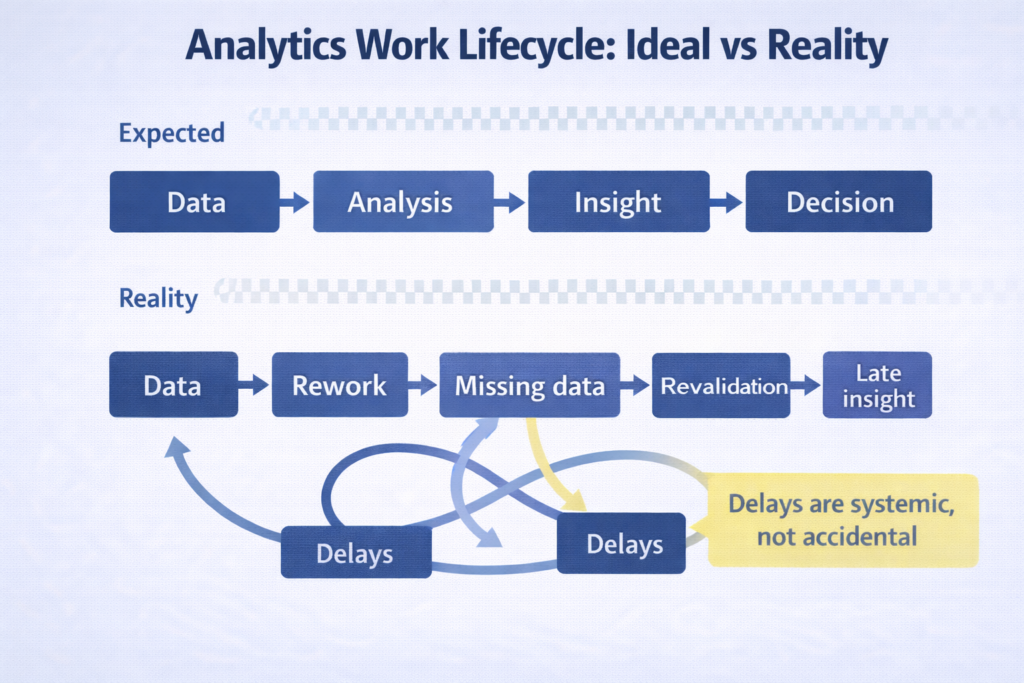

Why Do Analytics Projects Drift?

I don’t think organizations intend for analytics to become slow. It just happens gradually and almost invisibly.

It usually starts with good intent.

“We should do this properly.”

“Let’s make sure the data is complete.”

“Since we are building this, let’s also include…”

And before long, a focused problem turns into an expanding project.

A few patterns show up consistently.

First, scope quietly explodes. What begins as a pricing analysis becomes a full-scale pricing engine. A customer segmentation exercise turns into a 20-variable clustering model with no clear use case.

Second, the data perfection trap kicks in. There is always one more dataset to include, one more inconsistency to resolve, one more validation to run. The pursuit of completeness becomes endless.

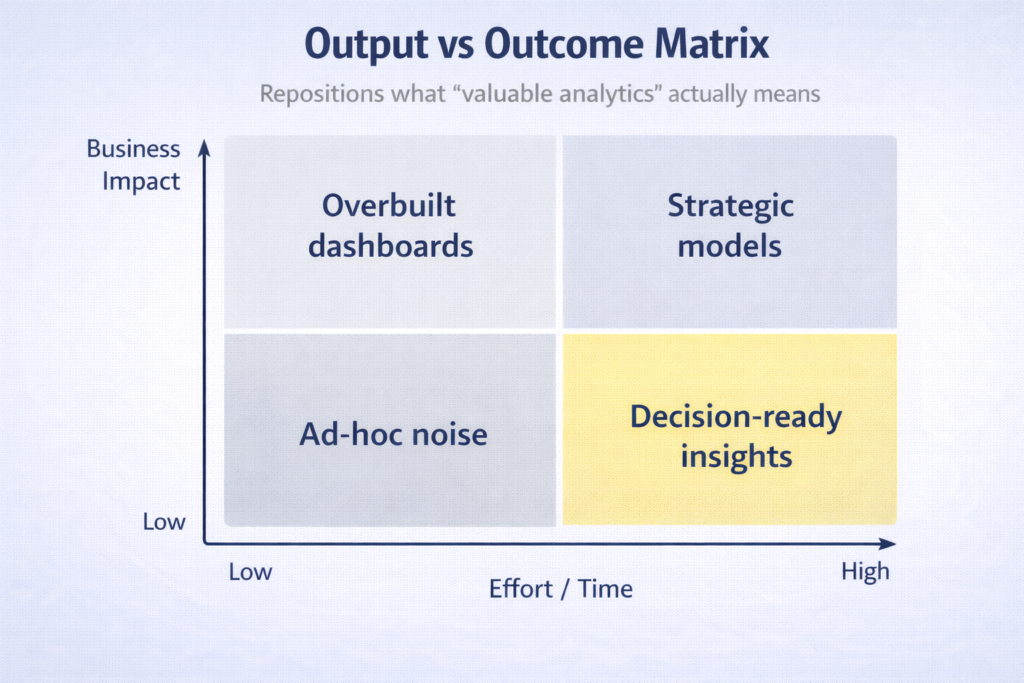

Third, there is an overemphasis on output. Dashboards become the goal instead of decisions. Visuals get refined, filters get added, layouts get debated while the actual business question remains loosely defined.

And finally, there is often no real owner of the decision. Analytics teams operate, business teams review, but no one is accountable for saying: “This is enough. Let’s act.”

Individually, each of these seems reasonable. Together, they stretch timelines from weeks into months.

The Shift That Changes Everything

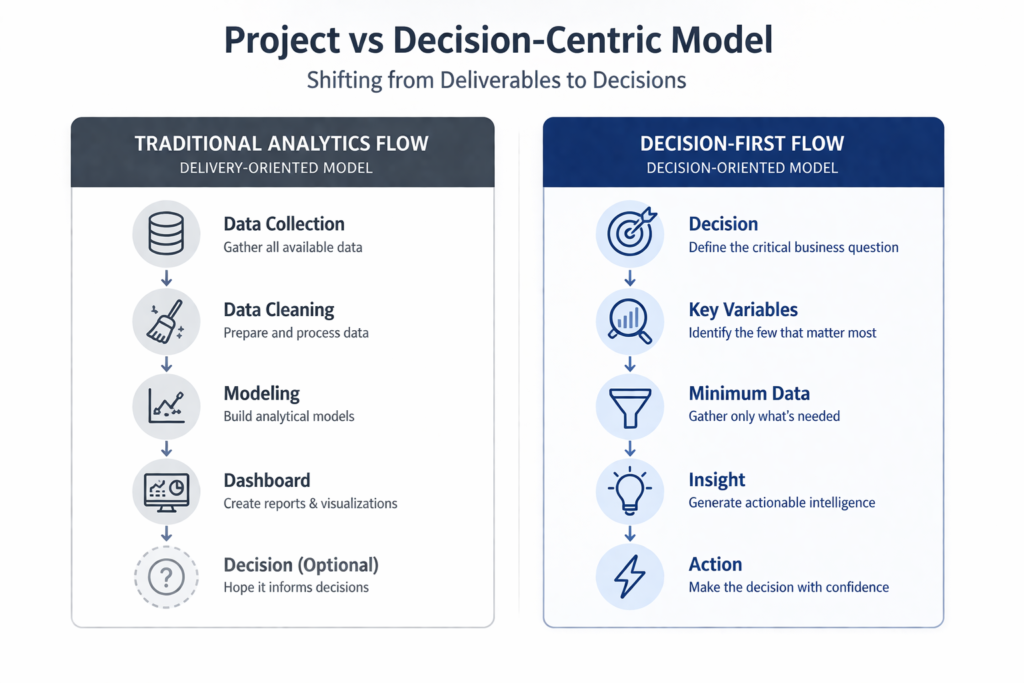

The turning point, in my experience, is deceptively simple.

You stop treating analytics as a project and start treating it as a decision cycle.

That shift changes the entire flow.

Instead of thinking:

Data → Analysis → Dashboard → Maybe a decision

You force the sequence to begin where it should:

Decision → Variables → Minimum data → Insight → Action

This is not just semantics. It is a structural change.

When you start with the decision, everything else becomes constrained in a useful way:

- You know what matters and what doesn’t

- You know how much data is enough

- You know when to stop

Speed is no longer a compromise. It becomes a natural outcome.

What “Weeks, Not Months” Actually Looks Like

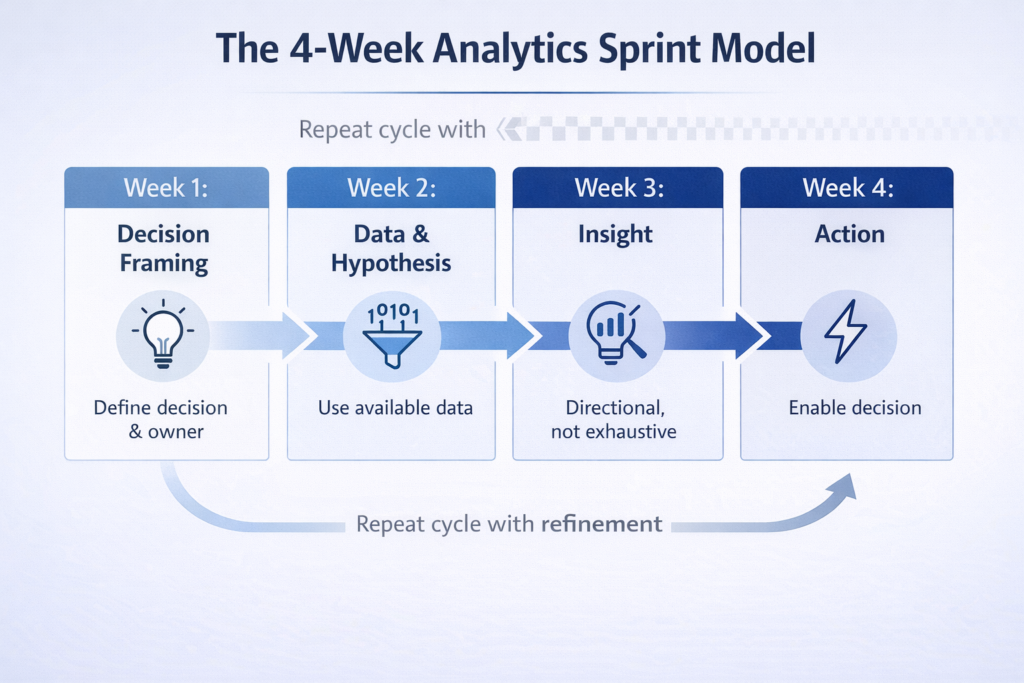

When people hear this, the immediate reaction is: “That sounds ideal, but not practical.”

In reality, it is very practical if you enforce discipline.

A typical analytics cycle can be compressed into a 3–4 week sprint without sacrificing value. What changes is not the effort, but the focus.

Week 1 is about clarity, not data.

You define the decision, the stakeholders and what success looks like. If this is vague, everything that follows will be slow.

Week 2 is about direction, not completeness.

You identify the key variables and pull whatever data is readily available. You don’t wait for perfect data. You start forming hypotheses.

Week 3 is about insight, not exhaustiveness.

You analyze just enough to answer the question directionally. Not every correlation needs to be tested. Not every edge case needs to be covered.

Week 4 is about action, not presentation.

You translate insight into a decision. Even if deployment is partially manual, it moves the organization forward.

The difference is subtle but powerful.

You are no longer building something to be admired. You are enabling something to be used.

What Changes When You Compress Time

Interestingly, shortening timelines doesn’t reduce quality. It changes the definition of quality.

You stop chasing theoretical completeness and start prioritizing relevance.

You become sharper in problem definition because you don’t have the luxury of ambiguity.

You reduce dependency chains – fewer teams, fewer approvals, fewer handoffs.

And perhaps most importantly, you increase the frequency of decisions.

This is where the real value lies.

Organizations don’t become data-driven because they have better models.

They become data-driven because they make more decisions, more often, with data.

Speed enables that.

The Objections That Always Come Up

At this point, there are usually a few familiar pushbacks.

“Our data is messy.”

It always is. Waiting for clean data is often just a way of delaying decisions. In most cases, 70% reliable data today is more useful than 100% reliable data three months later.

“We need to build this in a scalable way.”

Scale is important, but not at the beginning. The first goal is to prove that the decision logic works. You can scale something that works. You cannot scale ambiguity.

“Quality will suffer.”

This is worth challenging. Overengineering is often mistaken for quality. True quality, in analytics, is whether the output influences a decision.

“Stakeholders are not aligned.”

Long timelines don’t solve alignment; they hide the lack of it. Short cycles force conversations earlier and more frequently.

What Leadership Needs to Change

This shift cannot happen at the team level alone. It requires a change in what leadership values.

Most organizations, implicitly, reward:

- Completeness

- Accuracy

- Technical robustness

All of which are important, but insufficient.

What often goes unmeasured is decision turnaround time.

How long does it take from identifying a problem to acting on it?

If that metric is invisible, speed will always be deprioritized.
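Making that metric visible does not need heavy tooling. As a minimal sketch, assuming you keep a simple log of when each problem was identified and when it was acted on, decision turnaround time can be computed in a few lines of Python. The log entries, names and dates below are hypothetical and purely illustrative, and the median is just one reasonable way to summarize the numbers.

```python
from datetime import date
from statistics import median

# Hypothetical decision log: (decision, problem identified, action taken).
# Names and dates are illustrative, not real data.
DECISION_LOG = [
    ("pricing change", date(2024, 1, 8), date(2024, 1, 29)),
    ("segment campaign", date(2024, 2, 5), date(2024, 5, 6)),
    ("discount policy", date(2024, 3, 4), date(2024, 3, 18)),
]


def turnaround_days(identified: date, acted: date) -> int:
    """Calendar days from identifying the problem to acting on it."""
    return (acted - identified).days


def median_turnaround(log) -> float:
    """Median turnaround, in days, across all logged decisions."""
    return median(turnaround_days(identified, acted) for _, identified, acted in log)


if __name__ == "__main__":
    for name, identified, acted in DECISION_LOG:
        print(f"{name}: {turnaround_days(identified, acted)} days")
    print(f"median: {median_turnaround(DECISION_LOG)} days")
```

Even a rough log like this puts a number in front of leadership. The median is used here so that one very slow project does not hide the typical case, but tracking the slowest decisions separately is just as telling.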

Leaders need to start asking different questions:

- “When will we be able to act on this?”

- “What is the minimum we need to decide?”

- “Can we test this in the next two weeks?”

When those questions become common, behavior changes quickly.

The Real Trade-off

There is a trade-off here, but it is not what most people think.

It is not speed versus quality.

It is timeliness versus precision.

Early insights are directional. They may not capture every nuance.

Late insights are precise, but often disconnected from action.

The mistake is assuming that precision automatically creates value.

In reality, value is created when insight meets timing.

Which is why I often say: In analytics, timing is a dimension of accuracy.

A Final Thought

Most organizations believe they have an analytics capability.

- They have tools.

- They have dashboards.

- They have teams.

But if it takes months to answer a question that drives a decision, what they really have is a bottleneck.

Analytics was never meant to be a reporting function.

It is a decision-enablement function.

And decisions don’t wait.

If your analytics takes months, it is not a sign of sophistication.

It is a sign that the system is optimized for comfort and not for action.

The shift to weeks is not about working faster.

It is about working on the right problem, with the right constraints, at the right time.

And once that shift happens, speed stops being aspirational.

It becomes the default.
