Most analytics projects don’t fail because the insights are wrong. They fail because organizations lack the structure, incentives and workflows needed to turn those insights into consistent, real-world action.

I’ve seen this pattern play out more times than I can count.

A team spends months building pipelines, cleaning data, designing dashboards, sometimes even deploying sophisticated models. The project is showcased. Leadership nods. Everyone agrees it’s “valuable.”

And then, nothing happens.

No meaningful shift in business outcomes. No sustained change in behavior. The dashboards get opened in a few meetings, then slowly fade into the background.

The project didn’t fail in the way we usually define failure. It didn’t break. It didn’t produce wrong outputs.

It simply died at the last mile.

Over time, I’ve realized that this has very little to do with data quality, tooling or even analytical capability. The real issue is far more structural.

Most analytics projects fail because the insights they generate are not A.L.I.V.E.

What Do I Mean by “A.L.I.V.E.”?

After working across multiple analytics engagements, I started breaking down where exactly things fall apart. The pattern was consistent.

For analytics to create real impact, five conditions need to hold. When even one of them is missing, the last mile starts collapsing.

I call this the A.L.I.V.E. framework:

A — Absorption Capacity

L — Linkage to Workflow

I — Incentive Alignment

V — Validity & Trust

E — Execution Ownership

A — Absorption Capacity: The Most Ignored Constraint

Most organizations don’t have a shortage of insights anymore. They have a surplus.

There are dashboards for sales, operations, finance, customer behavior, supply chain and more. Alerts are generated. Reports are circulated. Metrics are tracked obsessively.

But here’s the uncomfortable reality: No organization has the bandwidth to act on everything it knows.

Managers are already dealing with operational firefighting, stakeholder demands and internal coordination. Adding more insights doesn’t automatically increase their ability to act.

In fact, it often does the opposite.

When too many signals compete for attention:

- Prioritization becomes unclear

- Everything feels important

- Nothing gets executed decisively

This is where many analytics projects quietly lose relevance – not because they are wrong, but because they are competing in an environment with limited attention.

This is fundamentally an execution bandwidth problem, not a data problem.
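
One way to make the bandwidth constraint concrete is to treat attention as a fixed budget of hours and let insights compete for it. The sketch below is only an illustration: the `Signal` fields, the scores and the greedy impact-per-hour rule are all assumptions, not a method described in this article, and it assumes every effort estimate is greater than zero.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    name: str
    expected_impact: float  # hypothetical score: estimated value if acted on
    effort_hours: float     # hypothetical estimate of time needed to act (> 0)


def select_within_capacity(signals, capacity_hours):
    """Greedily pick signals by impact per hour until the time budget runs out."""
    ranked = sorted(
        signals,
        key=lambda s: s.expected_impact / s.effort_hours,
        reverse=True,
    )
    selected, remaining = [], capacity_hours
    for signal in ranked:
        # Skip anything that no longer fits in the remaining budget.
        if signal.effort_hours <= remaining:
            selected.append(signal)
            remaining -= signal.effort_hours
    return selected


# Made-up signals, purely for illustration.
signals = [
    Signal("churn risk in segment A", expected_impact=50.0, effort_hours=10.0),
    Signal("late supplier deliveries", expected_impact=30.0, effort_hours=3.0),
    Signal("discount leakage", expected_impact=20.0, effort_hours=8.0),
    Signal("slow invoice approvals", expected_impact=8.0, effort_hours=2.0),
]

chosen = select_within_capacity(signals, capacity_hours=15.0)
print([s.name for s in chosen])
```

The point is not the ranking rule itself but the explicit cap: once the budget is spent, the remaining signals are deliberately left unaddressed rather than competing for the same attention.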

L — Linkage to Workflow: Where Insights Go to Die

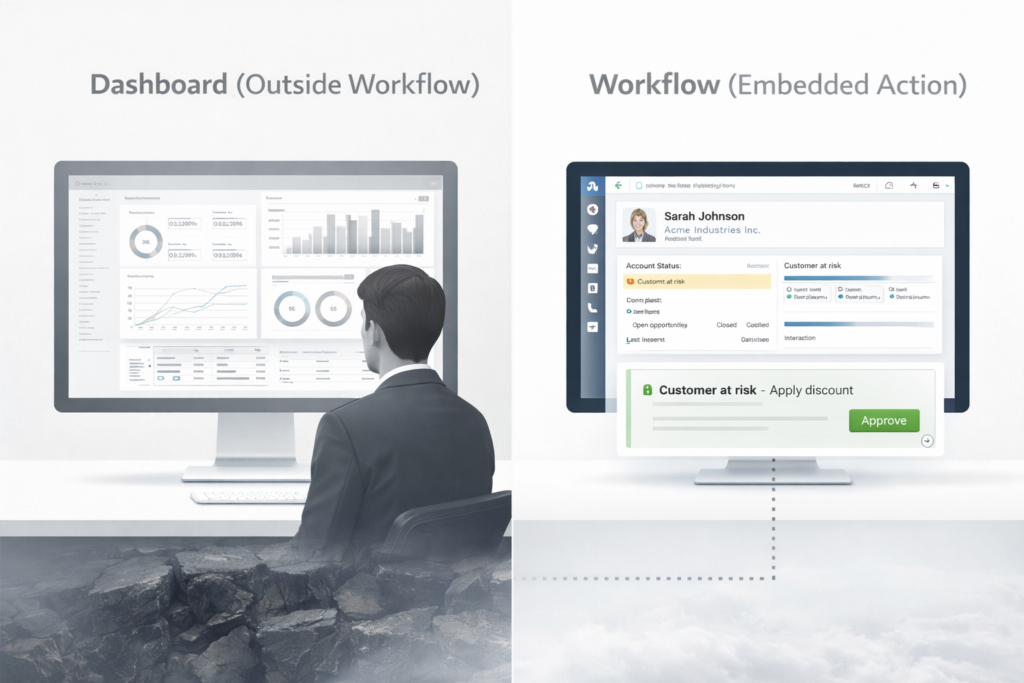

Most insights live in dashboards, built in tools like Power BI or Tableau.

That, in itself, is the problem.

A dashboard is not an action system. It is an observation system.

To act on an insight from a dashboard, a user has to interpret, decide and then execute elsewhere. That’s a lot of friction.

In reality, most decisions are made inside workflows:

- CRM systems

- ERP transactions

- Approval chains

- Operational routines

If the insight is not part of these workflows, it relies entirely on human intent.

And human intent is unreliable.

Dashboards don’t drive action. Workflows do.

This is where the gap between insight and action becomes visible.
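
As a rough sketch of what "part of the workflow" could look like, the snippet below turns an insight into an assigned, dated task instead of leaving it on a dashboard. Everything here is hypothetical: the `Insight` and `Task` types, the `to_task` helper, the owner lookup and the sample data are illustrative assumptions, and a real setup would create the task through whatever CRM or ticketing system the team already uses.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Insight:
    account: str
    finding: str
    recommended_action: str


@dataclass
class Task:
    title: str
    assignee: str
    due: date


def to_task(insight, account_owners, today, days_to_act=3):
    """Turn an insight into an assigned, dated task for the account's owner."""
    return Task(
        title=f"{insight.account}: {insight.recommended_action}",
        assignee=account_owners[insight.account],  # fails loudly if nobody owns it
        due=today + timedelta(days=days_to_act),
    )


# Made-up example data, purely for illustration.
account_owners = {"Acme Corp": "priya"}
insight = Insight(
    account="Acme Corp",
    finding="usage dropped sharply over the last month",
    recommended_action="schedule a retention call",
)

task = to_task(insight, account_owners, today=date(2024, 1, 8))
print(task)
```

The insight now arrives where the work already happens, with a name and a date attached, so acting on it no longer depends on someone remembering to open a dashboard.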

I — Incentive Alignment: The Silent Killer

This is one of the most under-discussed reasons analytics fails.

People rarely ignore insights because they fail to understand them. More often, they ignore them because acting on them is not in their best interest.

Acting introduces risk. Ignoring preserves stability.

From a rational standpoint:

- If acting fails → accountability increases

- If ignored → status quo continues

This is why many organizations face an analytics adoption gap.

It’s not a capability issue. It’s an incentive alignment issue.

Rational people ignore analytics when incentives punish action.

Unless incentives are aligned with outcomes, insights will remain unused.

V — Validity & Trust: Accuracy Is Not Enough

There is a common assumption that if a model is accurate, it will be used.

That assumption does not hold in practice.

Adoption is driven less by statistical performance and more by trust in analytics.

Business users care about:

- Can I explain this?

- Does it align with my experience?

- Has this worked before?

If these answers are unclear, hesitation creeps in.

Even highly accurate models fail if they lack explainability and business confidence.

Adoption is not driven by accuracy—it’s driven by trust.

Without trust, analytics remains theoretical.

E — Execution Ownership: Everyone Sees, No One Acts

Visibility is often mistaken for ownership.

Dashboards are shared. Metrics are reviewed. Insights are discussed.

But when it comes to action, there is ambiguity.

Who is responsible?

This becomes more complex in cross-functional environments:

- Sales vs Finance

- Supply Chain vs Demand Planning

- Marketing vs Operations

This creates an accountability gap.

When ownership is unclear:

- Action is delayed

- Decisions are deferred

- Impact is lost

If everyone sees it, no one owns it.

Strong analytics requires clear execution ownership tied to outcomes.

The Bigger Picture: Why the Last Mile Fails

When you step back, a pattern emerges.

Analytics does not fail at the level of data or models. It fails at the intersection of:

- human behavior

- organizational structure

- operational constraints

This is why the last mile problem persists.

It is not a technology gap. It is an organizational design problem.

A Better Way to Evaluate Analytics

Instead of asking:

- How accurate is the model?

- How advanced is the dashboard?

A better question is:

Is your analytics A.L.I.V.E.?

- Can the organization absorb it?

- Is it embedded into workflows?

- Are incentives aligned?

- Is there trust?

- Is ownership clearly defined?

If the answer to any of these is no, the data-to-action gap will remain.
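
The checklist above can be sketched as a simple review step. This is a minimal illustration, not a formal scoring method: the `ALIVE_CHECKS` mapping, the `missing_conditions` helper and the sample answers are hypothetical names and values chosen for the example.

```python
# The five A.L.I.V.E. questions, keyed by a short label for each condition.
ALIVE_CHECKS = {
    "Absorption": "Can the organization absorb it?",
    "Linkage": "Is it embedded into workflows?",
    "Incentives": "Are incentives aligned?",
    "Validity": "Is there trust?",
    "Ownership": "Is ownership clearly defined?",
}


def missing_conditions(answers):
    """Return the conditions answered 'no' (unanswered counts as 'no')."""
    return [name for name in ALIVE_CHECKS if not answers.get(name, False)]


# Made-up answers for a hypothetical project review.
answers = {
    "Absorption": True,
    "Linkage": True,
    "Incentives": False,
    "Validity": True,
    "Ownership": False,
}

gaps = missing_conditions(answers)
if gaps:
    for name in gaps:
        print(f"Gap: {name} ({ALIVE_CHECKS[name]})")
else:
    print("All five conditions hold.")
```

Treating an unanswered question as a "no" is a deliberate choice in this sketch: if nobody can say whether a condition holds, it probably does not.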

Closing Thought

Most organizations don’t struggle with generating insights anymore.

They struggle with making those insights matter.

The real risk is not bad data or poor models. It is the growing pile of analytics that never translates into action.

That’s where the real failure happens.

Analytics doesn’t fail in dashboards. It fails in execution.

And that is why most analytics projects die at the last mile.
